How to become a virtual YouTuber

A brief history of virtual YouTubers

On November 29, 2016, Kizuna AI uploaded her first video to YouTube. With her, a new kind of virtual idol was born: a character brought to life through facial and motion capture technology.

Countless imitators followed, and new careers gradually emerged: the virtual YouTuber (VTuber), the virtual player and the virtual liver (virtual live streamer).

Over the next three years, the virtual anchor industry grew explosively, with new characters debuting at an average rate of about 100 per month. The pace did not begin to slow until the end of 2018. VTubers now shine on YouTube, Twitch, Niconico and other platforms, setting records for subscriptions, ratings and views. The leading channel, Kizuna AI's A.I.Channel, has nearly 2.67 million subscribers.

Note that an avatar driven by voice synthesis is not a true virtual YouTuber. A real VTuber is performed by a real person: real-time capture technology controls the virtual character, and the result is streamed to the audience through broadcasting software such as OBS.

Types of virtual YouTubers

Broadly, VTubers fall into two groups: corporate and independent. Companies are relatively strong in technology, capital and operations. The drawback is that corporate VTubers must follow company rules, which restrict the avatar's activities, speech and comments.

Independent VTubers, such as Inuyama Yuhime and Natori Saye, have to rely on themselves or a small circle of family and friends. They enjoy a high degree of freedom but face more difficulties. Although companies start with better resources, independents are not necessarily inferior to corporate VTubers.

How a VTuber operates depends on their positioning. Some focus on recorded videos, others on live streams. Most do both: live gaming to attract attention, talk shows to build interaction with viewers, and cover songs and dances to earn high view counts. These formats are standard for almost every VTuber.

In short, independent VTubers have few restrictions and a high degree of freedom but lack resources, while corporate VTubers face intensive planning and scheduling, must complete assigned tasks according to the rules, and cannot change their schedules at will.

Difficulties faced by virtual YouTubers

1. High cost of training

A high-quality VTuber usually needs professional training and guidance from a professional team. Whether company or individual, everyone has to start from scratch, from establishing the character persona to planning program content, and most content can only build on existing topics.

2. Insufficient technical ability

This is especially true for independent VTubers, whose limited funds often leave them technically underpowered. Avatar quality is tied to cost: the more detailed the model, the higher the production cost and the greater the modeling skill required.

3. Lack of creativity

Even after overcoming the first two problems, one remains: a lack of creativity. This is the most serious problem. If you don't win the audience's approval, all the previous work is wasted. Much of today's program content is homogeneous, and creative programs are needed to attract attention.

How to become a virtual YouTuber

To become a virtual anchor, you need three things: first, a 3D virtual character; second, a person in the middle, namely the performer; and finally, live streaming software, that is, VTuber software.

So how do they combine?

Behind every VTuber there is a performer responsible for the movements and a CV (character voice, i.e. voice actor) responsible for the voice. They can, of course, be the same person.

1. Motion performance + expression matching

The performer puts on the motion capture equipment, and every action they perform is recorded by the motion capture system. When the performance is finished, the motion data is saved to a file. We then combine this motion data with manually keyframed expression data in Unity and output a sequence, so that the resulting animation carries both the movements and the expressions.

Finally, export the animation as a green-screen video file. These are the movements you see the virtual anchor perform when you watch a virtual idol program.
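The article does this merge in Unity. Purely to illustrate the idea, the Python sketch below samples hand-keyed expression keyframes at each captured motion frame's timestamp using linear interpolation, so every output frame carries both a pose and an expression. The `MotionFrame` and `ExpressionKey` structures and the joint and blendshape names are hypothetical; they are not Unity's animation API.

```python
from bisect import bisect_right
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class MotionFrame:
    time: float                                      # seconds since the take started
    joints: Dict[str, Tuple[float, float, float]]    # hypothetical: joint -> rotation (degrees)


@dataclass
class ExpressionKey:
    time: float
    weights: Dict[str, float]                        # hypothetical: blendshape -> weight in [0, 1]


def sample_expression(keys: List[ExpressionKey], t: float) -> Dict[str, float]:
    """Linearly interpolate hand-keyed expression weights at time t (keys sorted by time)."""
    times = [k.time for k in keys]
    i = bisect_right(times, t)
    if i == 0:                      # before the first key: hold the first pose
        return dict(keys[0].weights)
    if i == len(keys):              # after the last key: hold the last pose
        return dict(keys[-1].weights)
    a, b = keys[i - 1], keys[i]     # a.time <= t < b.time, so the span is never zero
    u = (t - a.time) / (b.time - a.time)
    names = set(a.weights) | set(b.weights)
    return {n: (1 - u) * a.weights.get(n, 0.0) + u * b.weights.get(n, 0.0) for n in names}


def merge(motion: List[MotionFrame], keys: List[ExpressionKey]) -> List[dict]:
    """Attach an interpolated expression to every captured motion frame."""
    return [
        {"time": f.time, "joints": f.joints, "expression": sample_expression(keys, f.time)}
        for f in motion
    ]


# Usage: three captured frames, two hand-keyed expressions.
motion = [MotionFrame(t, {"head": (0.0, 0.0, 0.0)}) for t in (0.0, 0.5, 1.0)]
keys = [ExpressionKey(0.0, {"smile": 0.0}), ExpressionKey(1.0, {"smile": 1.0})]
merged = merge(motion, keys)
```

Linear interpolation is the simplest choice; animation tools usually also offer eased or curve-based blending between keys.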

2. Dubbing

The voice track can be recorded separately in a studio while the voice actor watches the motion footage, or the performer can wear a headset microphone during the performance and send the audio to a recording device while acting. This audio file becomes the virtual anchor's voice.

VTuber Software

Beyond the above, the most critical part is the technical side. Many people have no technical background, but VTuber software can solve much of the problem for them. Here I use Live3D's virtual live streaming tools, VTuber Maker and VTuber Editor, as examples.

I'll follow the three requirements mentioned above, starting with the 3D model:

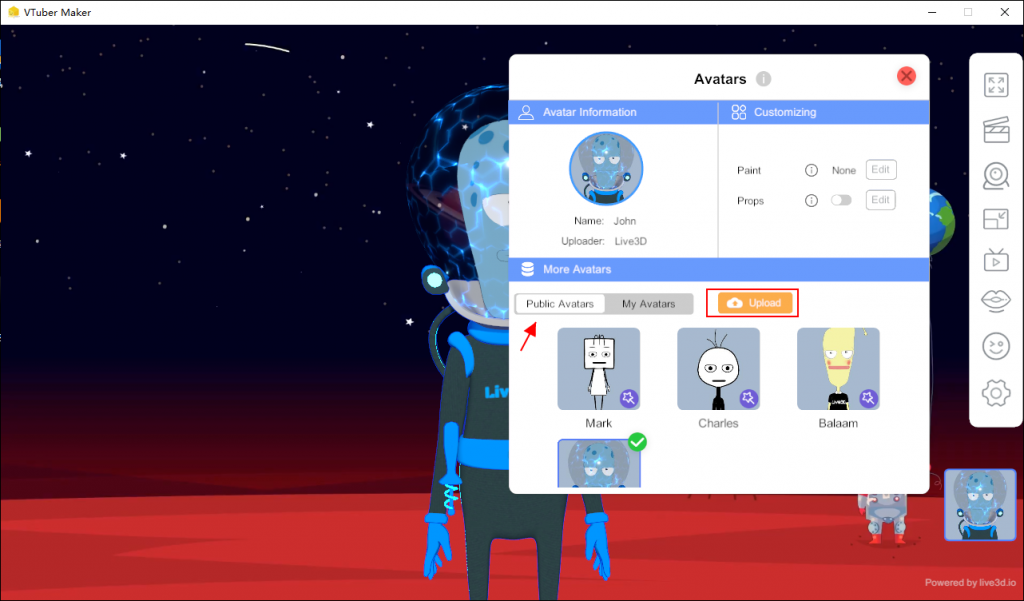

1. VTuber Maker offers many free public models, and for each avatar you can choose between a normal version and a cute version.

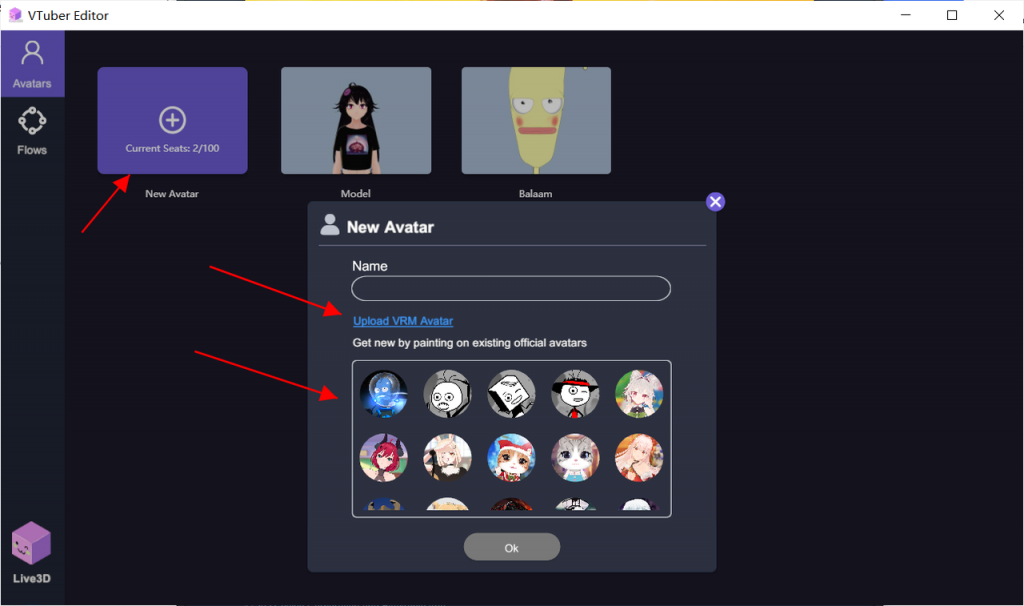

2. VTuber Editor supports uploading custom models. If you have your own VRM model, you can upload it directly.
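Before uploading, it can help to confirm that a file really is a VRM model. VRM files are glTF 2.0 binaries (GLB), so a minimal sketch can read the 12-byte GLB header, parse the JSON chunk, and look for the VRM extension (`VRM` in VRM 0.x, `VRMC_vrm` in VRM 1.0). This is an assumed pre-upload sanity check, not how VTuber Editor validates models, and it does not verify that the model is complete.

```python
import json
import struct
from typing import Optional

GLB_MAGIC = 0x46546C67   # the bytes b"glTF" read as a little-endian uint32
JSON_CHUNK = 0x4E4F534A  # the bytes b"JSON" read as a little-endian uint32


def vrm_version(data: bytes) -> Optional[str]:
    """Return "1.0" or "0.x" if `data` looks like a VRM file, otherwise None."""
    if len(data) < 20:
        return None
    # GLB header: magic, container version, total length (three little-endian uint32).
    magic, version, _total_length = struct.unpack_from("<III", data, 0)
    if magic != GLB_MAGIC or version != 2:
        return None
    # First chunk header: chunk length, chunk type. The first chunk must be JSON.
    chunk_len, chunk_type = struct.unpack_from("<II", data, 12)
    if chunk_type != JSON_CHUNK or 20 + chunk_len > len(data):
        return None
    try:
        gltf = json.loads(data[20:20 + chunk_len])
    except ValueError:  # covers malformed JSON and undecodable bytes
        return None
    names = set(gltf.get("extensionsUsed", [])) | set(gltf.get("extensions", {}))
    if "VRMC_vrm" in names:
        return "1.0"
    if "VRM" in names:
        return "0.x"
    return None


# Usage (the path is a placeholder):
# with open("my_avatar.vrm", "rb") as f:
#     print(vrm_version(f.read()))
```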

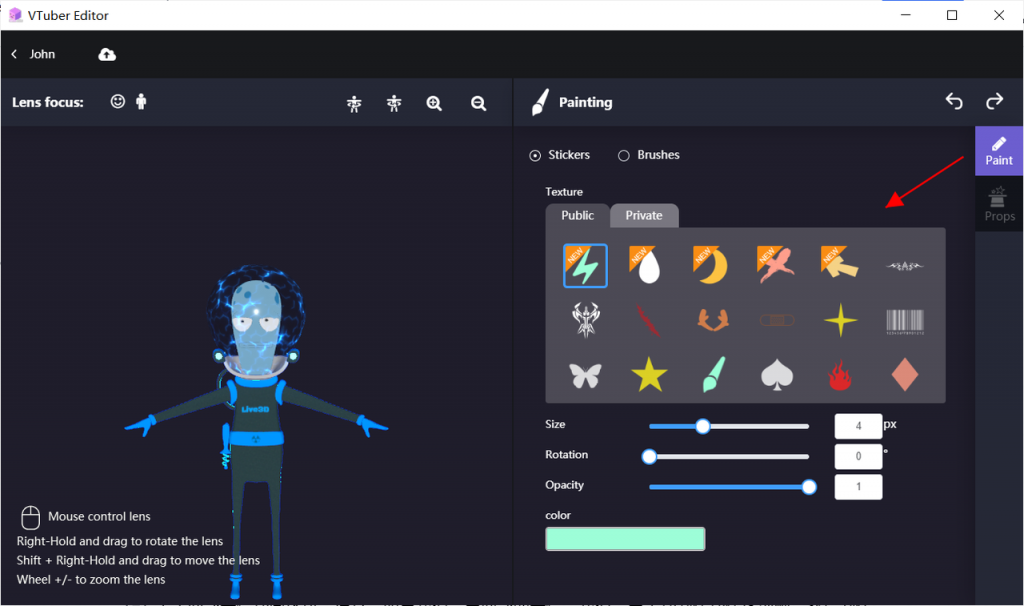

3. Customize the avatar in VTuber Editor: add decorations, colors or icons to the character, and apply preset action animations directly to the model. You can also configure the model's parameters yourself.

4. Upload or customize your model in VTuber Editor and save it, then load the customized model in VTuber Maker to start streaming.

As for facial expression animation, the usual approach is to keyframe expression sequences by hand and turn them into animations, which often cannot meet more personalized needs. Live3D's solution is camera-based facial motion capture plus voice capture.
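The article does not explain how Live3D's voice capture works. One common, simple approach, shown here as an assumption rather than Live3D's method, is to drive the avatar's mouth-open blendshape from the loudness (RMS) of each short audio frame, with light smoothing between frames. The `noise_floor` and `full_open` thresholds are hypothetical tuning values.

```python
import math
from typing import List, Sequence


def rms(samples: Sequence[float]) -> float:
    """Root-mean-square loudness of one audio frame (samples in [-1.0, 1.0])."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def mouth_open_weight(
    samples: Sequence[float],
    noise_floor: float = 0.02,  # hypothetical: below this level the mouth stays closed
    full_open: float = 0.3,     # hypothetical: at or above this level the mouth is fully open
) -> float:
    """Map a frame's loudness linearly onto a mouth-open weight clamped to [0, 1]."""
    weight = (rms(samples) - noise_floor) / (full_open - noise_floor)
    return max(0.0, min(1.0, weight))


def smooth(previous: float, target: float, alpha: float = 0.5) -> float:
    """Exponential smoothing so the mouth does not flicker from frame to frame."""
    return previous + alpha * (target - previous)


# Usage: silence, moderate speech, loud speech (10 ms frames at 16 kHz).
frames: List[List[float]] = [[0.0] * 160, [0.16] * 160, [0.5, -0.5] * 80]
mouth = 0.0
history = []
for frame in frames:
    mouth = smooth(mouth, mouth_open_weight(frame))
    history.append(mouth)
```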

To set up facial capture:

Open camera capture -> open camera settings -> recalibrate

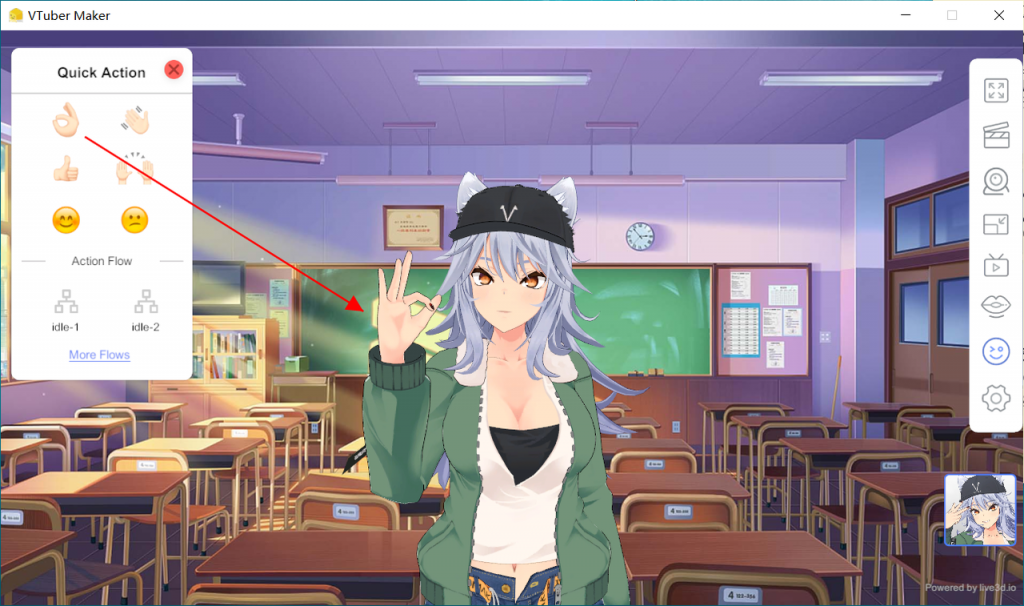
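Recalibrating typically means recording your neutral face so that later readings are measured relative to it. The sketch below illustrates that idea under stated assumptions: the tracker is imagined to output named values such as head yaw and pitch in degrees, and `FaceCalibrator` is a hypothetical helper, not part of Live3D.

```python
from statistics import mean
from typing import Dict, List


class FaceCalibrator:
    """Hypothetical helper: remember a neutral pose and report readings relative to it."""

    def __init__(self) -> None:
        self.neutral: Dict[str, float] = {}

    def calibrate(self, samples: List[Dict[str, float]]) -> None:
        """Average several frames captured while the user looks straight at the camera."""
        if not samples:
            raise ValueError("need at least one frame to calibrate")
        self.neutral = {name: mean(frame[name] for frame in samples) for name in samples[0]}

    def apply(self, raw: Dict[str, float]) -> Dict[str, float]:
        """Subtract the neutral baseline so a neutral face maps to zero."""
        return {name: value - self.neutral.get(name, 0.0) for name, value in raw.items()}


# Usage: calibrate on two neutral frames, then correct a live reading.
cal = FaceCalibrator()
cal.calibrate([{"yaw": 4.0, "pitch": -2.0}, {"yaw": 6.0, "pitch": -2.0}])
corrected = cal.apply({"yaw": 5.0, "pitch": 3.0})
```

Averaging several frames rather than taking one keeps a single noisy reading from skewing the baseline.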

For body motion, Live3D provides simple action settings and turns frequently used actions into shortcuts. Press a shortcut key and the virtual character performs the action immediately.

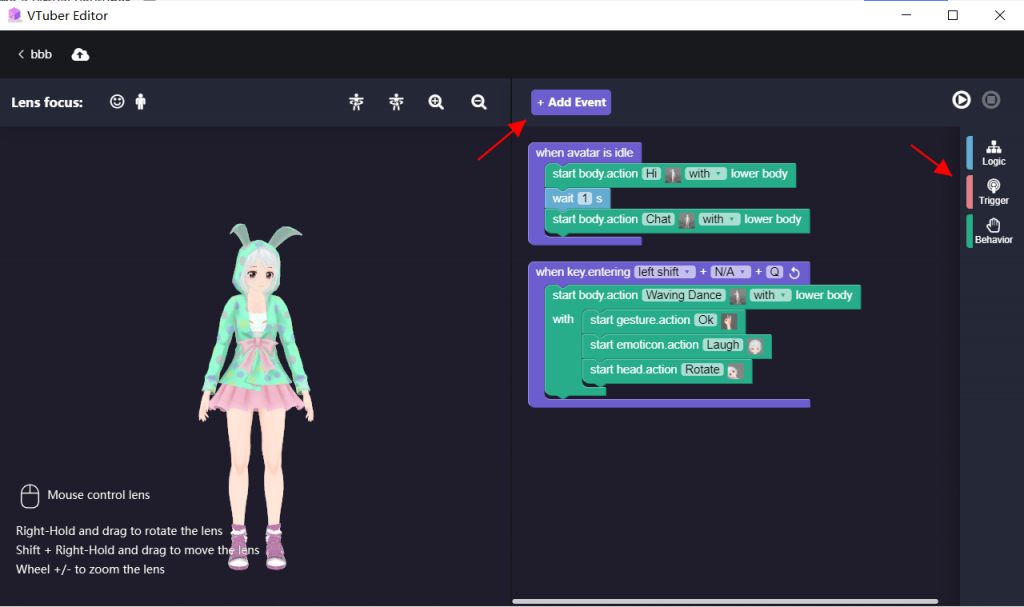

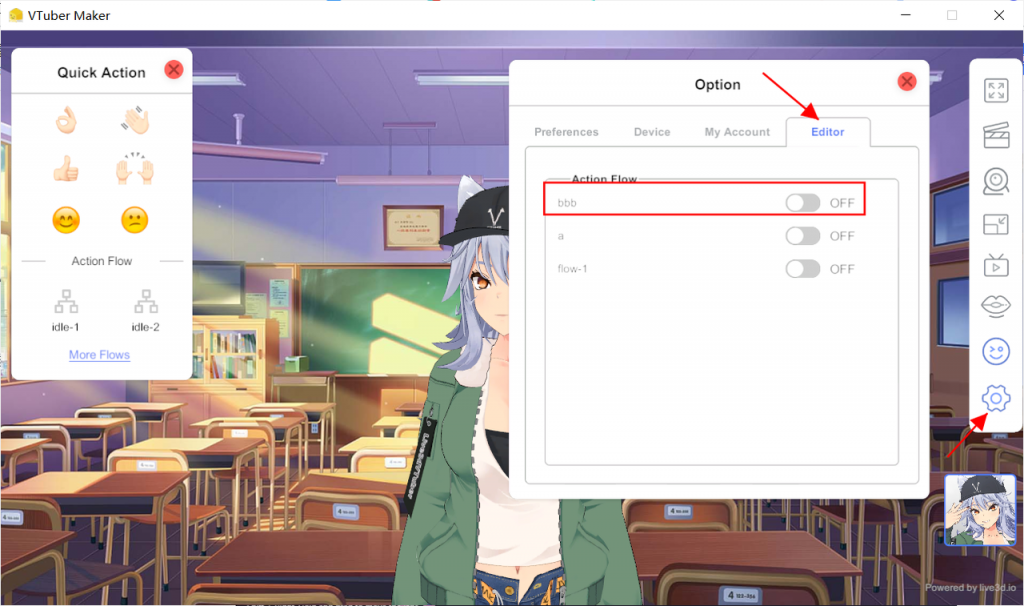

If you need custom actions, Live3D supports those too: edit the preset actions in VTuber Editor, then use them on your virtual character in VTuber Maker.
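Conceptually, shortcut actions are a lookup from a key to a preset animation, and custom actions simply add entries to that lookup. The sketch below shows the mapping; the key names, action names such as `"wave"`, and the `play` callback are hypothetical stand-ins for whatever VTuber Maker uses internally.

```python
from typing import Callable, Dict


class ShortcutBindings:
    """Hypothetical key-to-action table for triggering preset animations."""

    def __init__(self) -> None:
        self._bindings: Dict[str, str] = {}

    def bind(self, key: str, action: str) -> None:
        """Assign a preset or custom action to a key (keys are case-insensitive)."""
        self._bindings[key.lower()] = action

    def on_key(self, key: str, play: Callable[[str], None]) -> bool:
        """Play the bound action if there is one; return whether anything played."""
        action = self._bindings.get(key.lower())
        if action is None:
            return False
        play(action)
        return True


# Usage: a built-in preset and a custom action edited in advance.
played = []
shortcuts = ShortcutBindings()
shortcuts.bind("F1", "wave")
shortcuts.bind("F2", "my_custom_bow")
```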

VTuber Maker also supports capture with external hardware: turn on the camera and connect a Leap Motion controller to capture the upper body, head and hand gestures for live streaming.

Full-body motion capture for VTuber Maker is still under development.

Starting a live stream

Everything above covers producing the program content. There are two ways to deliver it. The first is to record the program in advance and then stream it through OBS.

The second is to use a third-party streaming or video conferencing platform to stream in real time to a live audience. Either way, the content can be pre-recorded or performed live, but the audience always sees the virtual character performing.

So how do you do each of these with VTuber Maker? Here is a practical demonstration:

1. Streaming with OBS

Start your performance or play your recorded content, then open OBS and set it to capture the window you want to stream. Your fans can then watch your performance directly.

2. Using a streaming or online meeting platform

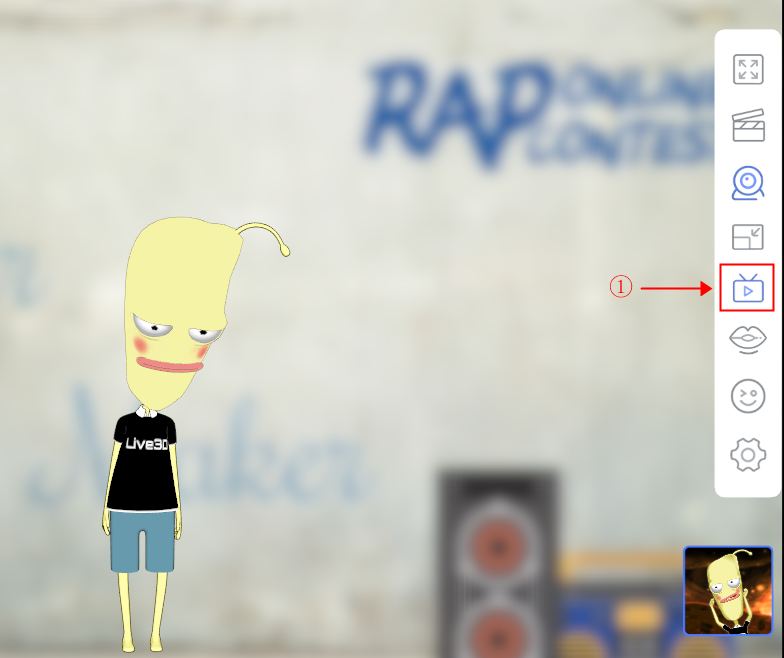

When everything is ready, turn on the virtual camera in VTuber Maker and then switch on broadcasting. Using Zoom as an example, simply open a meeting window to go live.

①Turn on camera capture.

②In VTuber Maker, turn on Virtual Broadcast to enable virtual live streaming.

③In Zoom (our example), start a video meeting.

④Select "VTuber Maker Virtual Camera" as the camera to bring the VTuber Maker view into the meeting.

You can then turn on facial capture and Leap Motion capture; your voice and the avatar's performance will stream to the meeting platform, and you can start performing.

Final summary

How do you become a virtual YouTuber? Hopefully this article has cleared things up. In short, you need two things: live streaming software such as VTuber Maker, and creative content for your show. If you want more extensive motion capture, you will also need to buy some capture hardware.

The live streaming tool helps you produce the content, the streaming platform pushes it to your audience, and with that, you are a VTuber.